Final Year Project: Chinese Calligraphy Robot

May 2024 - Nov 2025

Technologies: Python, Tkinter, Threading, Hermite Curves, YOLOv8, ChatGPT API, OpenCV

Overview

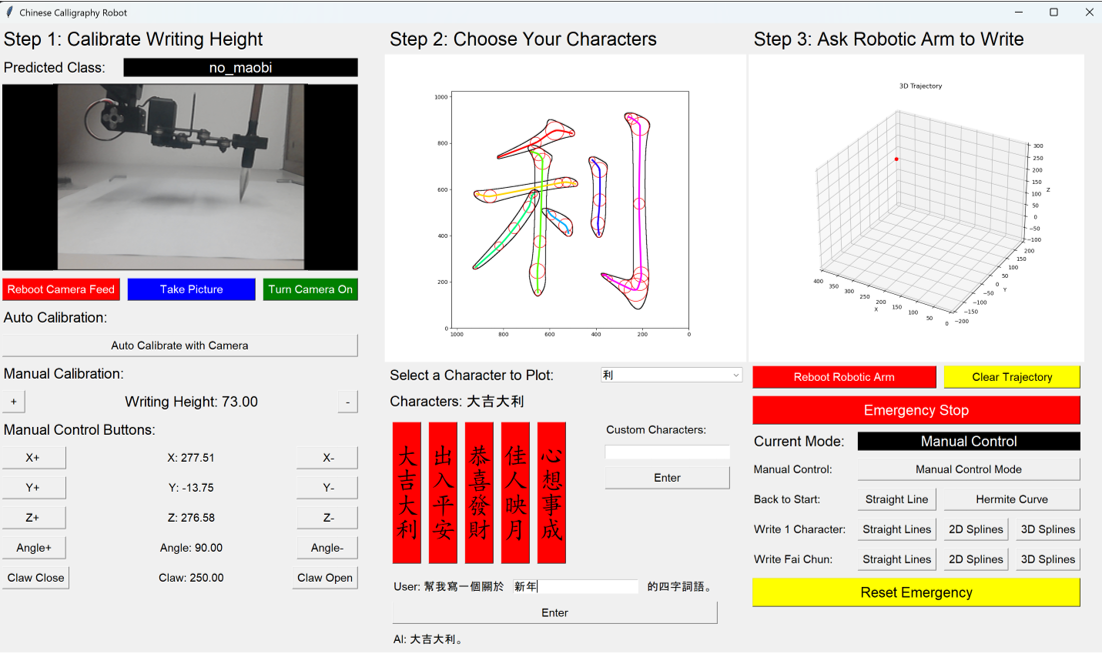

This project combines robotics, computer vision, and AI to create a system that writes authentic Chinese calligraphy using a 5-DoF robotic arm. The key innovation is a 3D Hermite curve-based path planning algorithm that modulates brush height to vary stroke thickness - a hallmark of traditional calligraphy. The system accepts natural language prompts, uses ChatGPT (via HKUST Azure API) to suggest four-character idioms, and generates corresponding brush trajectories. A computer vision module (YOLOv8) calibrates the optimal writing height and provides emergency stop functionality.

Awards & Publications

ISAM 2025

IEEE TENCON 2025

IET YPEC 2025

HKUST ECE FYP 2024-25

Technical Highlights

🤖 3D Path Planning

- Uses Hermite curves with modified Catmull-Rom spline interpolation for smooth trajectories.

- Brush height modulated via largest fitting circles algorithm - lower brush = thicker strokes.

- Supports 2D (fixed height) and 3D (variable height) modes for comparison.

🧠 AI Integration

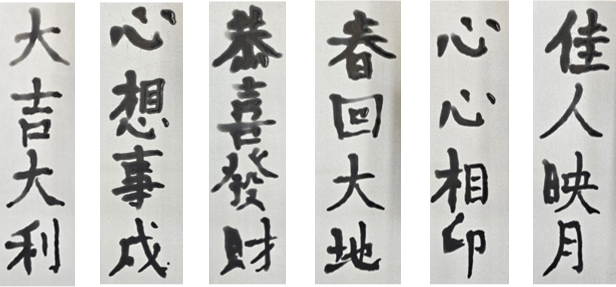

- ChatGPT (HKUST Azure API) generates four-character idioms (e.g., 大吉大利) from user prompts.

- YOLOv8 object detection model trained to detect brush bending, used for calibration and emergency stop.

- Dataset: 994 annotated images of the brush on paper, augmented with Roboflow to 2386 images.

⚙️ Software Architecture

- Multithreaded Python application with five concurrent threads: UI, control (FSM), JSON output, keyboard, camera.

- Non-blocking FSM handles modes: manual, single curve, single character, four-character phrase.

- Serial JSON commands to arm at 50 Hz with emergency stop.
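The non-blocking FSM described above can be sketched roughly as follows. This is a minimal illustration, not the project's actual control thread: the class name `ControlFSM`, its methods, and the waypoint representation are all assumptions made for the example. The key idea is that each call to `step()` does a small amount of work and returns immediately, so the UI and camera threads are never starved.

```python
from enum import Enum, auto


class Mode(Enum):
    # The four modes listed above.
    MANUAL = auto()
    SINGLE_CURVE = auto()
    SINGLE_CHARACTER = auto()
    FOUR_CHARACTER_PHRASE = auto()


class ControlFSM:
    """Hypothetical non-blocking FSM: step() never waits on the arm."""

    def __init__(self):
        self.mode = Mode.MANUAL
        self.pending = []  # waypoints that still have to be sent to the arm

    def start(self, mode, waypoints):
        """Enter a writing mode with a precomputed list of waypoints."""
        self.mode = mode
        self.pending = list(waypoints)

    def emergency_stop(self):
        """Drop all queued motion and fall back to manual mode."""
        self.pending.clear()
        self.mode = Mode.MANUAL

    def step(self):
        """Advance by one tick; return the next waypoint, or None when idle."""
        if self.mode is Mode.MANUAL or not self.pending:
            self.mode = Mode.MANUAL
            return None
        return self.pending.pop(0)


fsm = ControlFSM()
fsm.start(Mode.SINGLE_CURVE, [(0, 0, 0), (1, 0, 0)])
first = fsm.step()       # first waypoint is handed out
fsm.emergency_stop()     # remaining waypoint is discarded
after_stop = fsm.step()  # nothing left to do
```

Because the state lives in plain attributes and every transition is a short method call, an emergency stop raised from another thread takes effect on the next tick.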

📊 Dataset & Graphics

- Stroke data from Make Me a Hanzi - 1024×1024 SVG paths with medians.

- Conversion scripts extract control points and compute 3D height based on largest fitting circles.

- Test scripts generate visualisations (GIFs, 3D plots) for presentations.

Detailed Technical Explanation

1. System Architecture

The system comprises a 5-DoF Waveshare RoArm-M1 robotic arm, a 480p USB camera, a water-writing cloth, and a laptop running Python. The software is multithreaded: a Tkinter UI thread, a control thread (finite state machine), a JSON command thread to the arm, a keyboard input thread, and a camera thread. The arm is controlled via serial JSON commands (115200 baud) at 50 Hz.
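A fixed-rate JSON command loop of the kind described above might look like the sketch below. The payload keys (`x`, `y`, `z`) are placeholders rather than the RoArm-M1 command schema, and an in-memory `io.BytesIO` stands in for the serial port so the example runs without hardware; on a real setup the port would presumably be opened at 115200 baud with a serial library.

```python
import io
import json
import queue
import threading
import time

PERIOD_S = 1 / 50  # 50 Hz command rate, as stated above


def command_loop(port, commands, stop_event, period_s=PERIOD_S):
    """Write one JSON line per tick until stopped or the queue is empty."""
    next_tick = time.monotonic()
    while not stop_event.is_set():
        try:
            cmd = commands.get_nowait()
        except queue.Empty:
            break  # a long-running thread would wait for more commands instead
        port.write((json.dumps(cmd) + "\n").encode("utf-8"))
        # Schedule against absolute time so timing errors do not accumulate.
        next_tick += period_s
        time.sleep(max(0.0, next_tick - time.monotonic()))


fake_port = io.BytesIO()  # stand-in for the serial connection
commands = queue.Queue()
for i in range(5):
    commands.put({"x": 100 + i, "y": 0, "z": 50})  # hypothetical payload
stop_event = threading.Event()  # setting this event acts as the emergency stop

worker = threading.Thread(target=command_loop, args=(fake_port, commands, stop_event))
worker.start()
worker.join()
sent = fake_port.getvalue().decode("utf-8").splitlines()
```

Checking `stop_event` once per tick bounds the emergency-stop latency to roughly one period (20 ms at 50 Hz).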

2. Leveraging LLM for Inputting Chinese Characters in Robotic Calligraphy

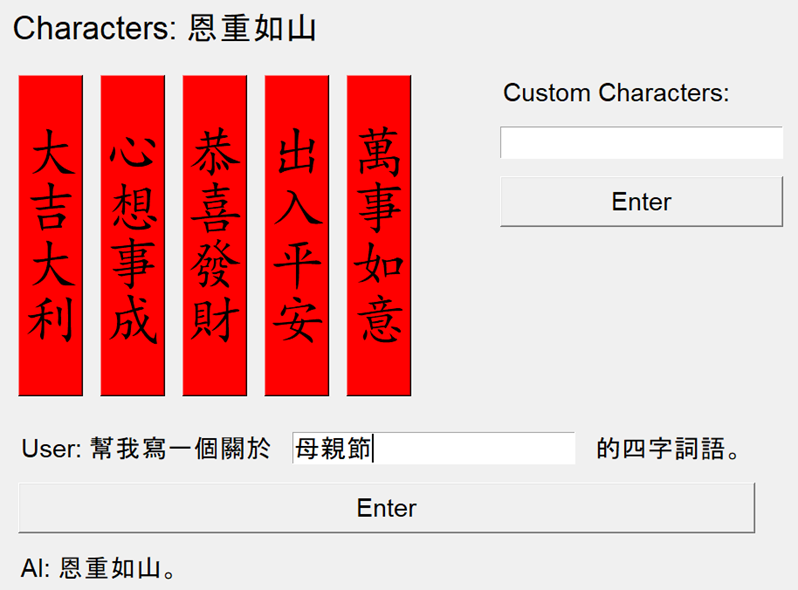

The system uses the HKUST GenAI Platform API (ChatGPT-4o) to generate creative four-character idioms from user-provided themes (e.g., "Mother's Day"). For example:

Input: 幫我寫一個關於 母親節 的四字詞語 ("Write me a four-character idiom about Mother's Day") → Output: 恩重如山 ("kindness as weighty as a mountain")

Alternatively, users can select from pre-defined four-character idioms by clicking the red banners above, or input their own custom idiom in either traditional or simplified Chinese.
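Whichever input path is used, the text has to be reduced to exactly four Chinese characters before trajectories can be generated. The sketch below shows one way this could be done; the helper names `build_prompt` and `extract_idiom` are hypothetical, the API call itself is omitted, and the character range covers only the basic CJK Unified Ideographs block.

```python
import re

_HANZI = r"[\u4e00-\u9fff]"  # basic CJK Unified Ideographs block
# A run of exactly four Chinese characters, not part of a longer run.
FOUR_HANZI = re.compile(rf"(?<!{_HANZI}){_HANZI}{{4}}(?!{_HANZI})")


def build_prompt(theme):
    """Format the request shown in the example above for a given theme."""
    return f"幫我寫一個關於 {theme} 的四字詞語"


def extract_idiom(reply):
    """Return the first standalone four-character run in a reply, or None."""
    match = FOUR_HANZI.search(reply)
    return match.group(0) if match else None


prompt = build_prompt("母親節")
idiom = extract_idiom("以下是一個四字詞語：恩重如山。")
```

The lookarounds matter because a model may wrap the idiom in a longer sentence; without them the first four characters of the preamble would be returned instead.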

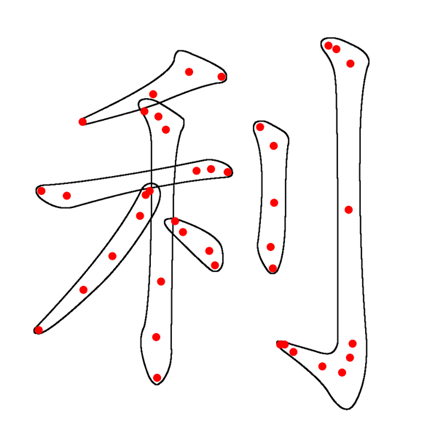

3. Fetch 2D Control Points

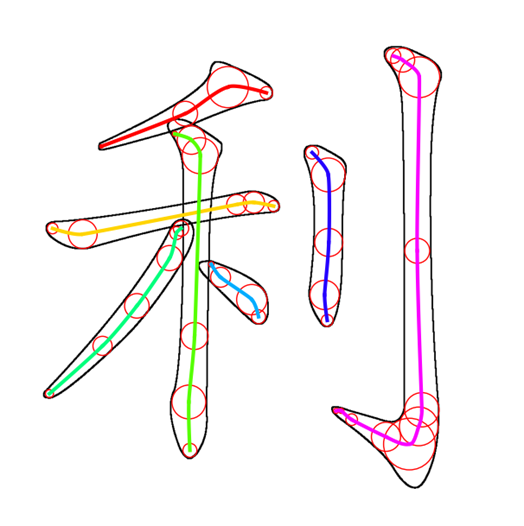

The open-source Make Me a Hanzi dataset provides stroke-ordered vector graphics, including medians (red control points) and boundaries (black lines) for each Chinese character. These 2D control points are extracted to represent the structure of the character strokes.
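Extracting the medians could look roughly like this. The sketch assumes the dataset's `graphics.txt` layout (one JSON object per line with `character`, `strokes`, and `medians` keys) and assumes that the y axis points up with the top edge at y = 900; the sample record uses made-up coordinates, and `load_medians` is an illustrative name, not a function from the project.

```python
import json

# One record in the style of graphics.txt; the coordinates are invented.
SAMPLE_LINE = json.dumps(
    {
        "character": "一",
        "strokes": ["M 100 400 L 900 400 L 900 460 L 100 460 Z"],
        "medians": [[[120, 430], [500, 432], [880, 430]]],
    },
    ensure_ascii=False,
)


def load_medians(line, flip_y=True):
    """Return (character, strokes); each stroke is a list of (x, y) points."""
    record = json.loads(line)
    strokes = []
    for median in record["medians"]:
        # Assumption: dataset y points up from a top edge at 900, so 900 - y
        # converts to image-style coordinates with y pointing down.
        strokes.append([(x, 900 - y) if flip_y else (x, y) for x, y in median])
    return record["character"], strokes


character, strokes = load_medians(SAMPLE_LINE)
```

Strokes come back in writing order, so iterating over `strokes` already follows the stroke order the arm should use.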

4. Convert 2D Points to 3D

Using the extracted 2D control points, the system computes the largest fitting circle for each median point that does not intersect the stroke boundary. The radius of the circle indicates the local stroke thickness, which is then used to calculate the Z-coordinate (depth). A larger radius corresponds to a thicker stroke, meaning the brush is pressed down further. The Z-coordinate is calculated using the following equation:

Here, r_avg is the dataset average radius (≈ 20), r_c is the current circle radius, and Z₀ is the calibrated base height (FYP report Section 2.2.2, TENCON paper Eq. 1).
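To make the idea concrete, the sketch below computes the largest circle centred on a median point as the minimum distance to the stroke boundary, then maps the radius to a height. Two things are assumptions here: the boundary is treated as a closed polyline (curved SVG segments would need to be flattened first), and `brush_height` uses an illustrative linear mapping with a made-up `gain`. It is not the equation from the report; it only preserves the stated behaviour that a larger radius lowers the brush.

```python
import math


def point_segment_distance(p, a, b):
    """Shortest distance from point p to the segment a-b (all 2D tuples)."""
    px, py = p
    ax, ay = a
    bx, by = b
    dx, dy = bx - ax, by - ay
    seg_len_sq = dx * dx + dy * dy
    if seg_len_sq == 0:
        return math.hypot(px - ax, py - ay)
    # Project p onto the segment and clamp to its endpoints.
    t = max(0.0, min(1.0, ((px - ax) * dx + (py - ay) * dy) / seg_len_sq))
    return math.hypot(px - (ax + t * dx), py - (ay + t * dy))


def largest_circle_radius(point, boundary):
    """Radius of the largest circle centred on `point` inside the boundary.

    Assumes `point` lies inside the closed polyline `boundary`.
    """
    n = len(boundary)
    return min(
        point_segment_distance(point, boundary[i], boundary[(i + 1) % n])
        for i in range(n)
    )


def brush_height(r_c, z0, r_avg=20.0, gain=0.1):
    """Illustrative linear mapping (assumption): larger radius, lower brush."""
    return z0 - gain * (r_c - r_avg)


outline = [(0, 0), (100, 0), (100, 40), (0, 40)]  # a 100 x 40 rectangle
radius = largest_circle_radius((50, 20), outline)
z_average = brush_height(radius, z0=50.0)
z_thick = brush_height(30.0, z0=50.0)
```

With this mapping a point of average thickness sits at the calibrated height Z₀, and thicker regions press the brush below it.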

5. 3D Path Planning with Hermite Curves

The core of the algorithm uses Hermite curves defined by start/end positions and velocities:
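For reference, the standard cubic Hermite form blends the two positions and two velocities with four basis polynomials in t ∈ [0, 1]. A minimal Python sketch follows; the function name `hermite` is illustrative and not taken from the project source.

```python
def hermite(p0, v0, p1, v1, t):
    """Evaluate a cubic Hermite curve at t in [0, 1] for points of any dimension."""
    t2 = t * t
    t3 = t2 * t
    h00 = 2 * t3 - 3 * t2 + 1  # weight of the start position
    h10 = t3 - 2 * t2 + t      # weight of the start velocity
    h01 = -2 * t3 + 3 * t2     # weight of the end position
    h11 = t3 - t2              # weight of the end velocity
    return tuple(
        h00 * a + h10 * b + h01 * c + h11 * d
        for a, b, c, d in zip(p0, v0, p1, v1)
    )


start, end = (0.0, 0.0, 5.0), (10.0, 4.0, 3.0)
chord = tuple(e - s for s, e in zip(start, end))
# With both velocities equal to the chord, the curve is a straight line.
midpoint = hermite(start, chord, end, chord, 0.5)
```

Because the function works component-wise, the same code handles 2D (fixed height) and 3D (variable height) points.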

To connect multiple points in a stroke, a modified Catmull-Rom spline calculates velocities using the minimum of adjacent distances to prevent overshoot (FYP report Section 2.2.1, TENCON paper Eq. 4):

This ensures C¹ continuity and smooth trajectories even with unevenly spaced control points. The figure below shows a Hermite spline for the character "利" (from TENCON paper).
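One plausible reading of the minimum-distance rule is sketched below: the velocity direction at each interior control point follows the usual Catmull-Rom chord between its neighbours, while the magnitude is capped at the shorter of the two adjacent segment lengths. This interpretation, the endpoint handling, and the name `stroke_velocities` are assumptions; the exact formula is the one given in the cited report and paper.

```python
import math


def stroke_velocities(points):
    """Tangent per control point: Catmull-Rom direction, min-distance magnitude."""
    n = len(points)
    velocities = []
    for i in range(n):
        prev_pt = points[max(i - 1, 0)]
        next_pt = points[min(i + 1, n - 1)]
        chord = [b - a for a, b in zip(prev_pt, next_pt)]
        chord_len = math.hypot(*chord)
        if chord_len == 0:
            velocities.append(tuple(0.0 for _ in chord))
            continue
        if 0 < i < n - 1:
            # Interior point: cap the speed at the shorter adjacent segment.
            speed = min(
                math.dist(points[i - 1], points[i]),
                math.dist(points[i], points[i + 1]),
            )
        else:
            # Endpoint (assumption): use the one-sided segment length.
            speed = chord_len
        velocities.append(tuple(speed * c / chord_len for c in chord))
    return velocities


# Unevenly spaced control points: segments of length 1 and 10.
control_points = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (11.0, 0.0, 0.0)]
tangents = stroke_velocities(control_points)
```

For the middle point, a standard Catmull-Rom tangent would be half the neighbour chord, (5.5, 0, 0), which is far larger than the short first segment; the capped version gives (1, 0, 0) instead.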

6. Connecting Strokes and Characters

Between strokes, a connecting Hermite curve lifts the brush with G¹ continuity: the starting velocity matches the previous stroke's end direction, and the ending velocity is straight down. This creates a natural lifting motion. Between characters, a similar curve translates the arm vertically and horizontally.
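The lifting behaviour follows directly from the end conditions, as the sketch below illustrates. It assumes the z axis points up (so "straight down" is the negative z direction) and uses hypothetical names and speeds; `hermite` is the standard cubic Hermite evaluation, repeated here so the block is self-contained.

```python
def hermite(p0, v0, p1, v1, t):
    """Standard cubic Hermite evaluation at t in [0, 1]."""
    t2 = t * t
    t3 = t2 * t
    h00, h10 = 2 * t3 - 3 * t2 + 1, t3 - 2 * t2 + t
    h01, h11 = -2 * t3 + 3 * t2, t3 - t2
    return tuple(
        h00 * a + h10 * b + h01 * c + h11 * d
        for a, b, c, d in zip(p0, v0, p1, v1)
    )


def connecting_curve(end_pt, end_velocity, next_start, descent_speed, samples=20):
    """Sample a path from the end of one stroke to the start of the next.

    The start velocity continues the previous stroke's direction and the
    end velocity points straight down (assumption: z is up).
    """
    v_end = (0.0, 0.0, -descent_speed)
    return [
        hermite(end_pt, end_velocity, next_start, v_end, i / samples)
        for i in range(samples + 1)
    ]


# Previous stroke ends at the origin moving along +x; next stroke starts nearby.
path = connecting_curve((0.0, 0.0, 0.0), (30.0, 0.0, 0.0), (40.0, 20.0, 0.0), 54.0)
peak_height = max(p[2] for p in path)
```

When both endpoints are at the same height and the start velocity is horizontal, the height profile is s·t²(1 − t) for descent speed s, which is never negative and peaks at 4s/27 (8 units here), so the brush rises off the surface and comes back down vertically without any explicit "lift" waypoint.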

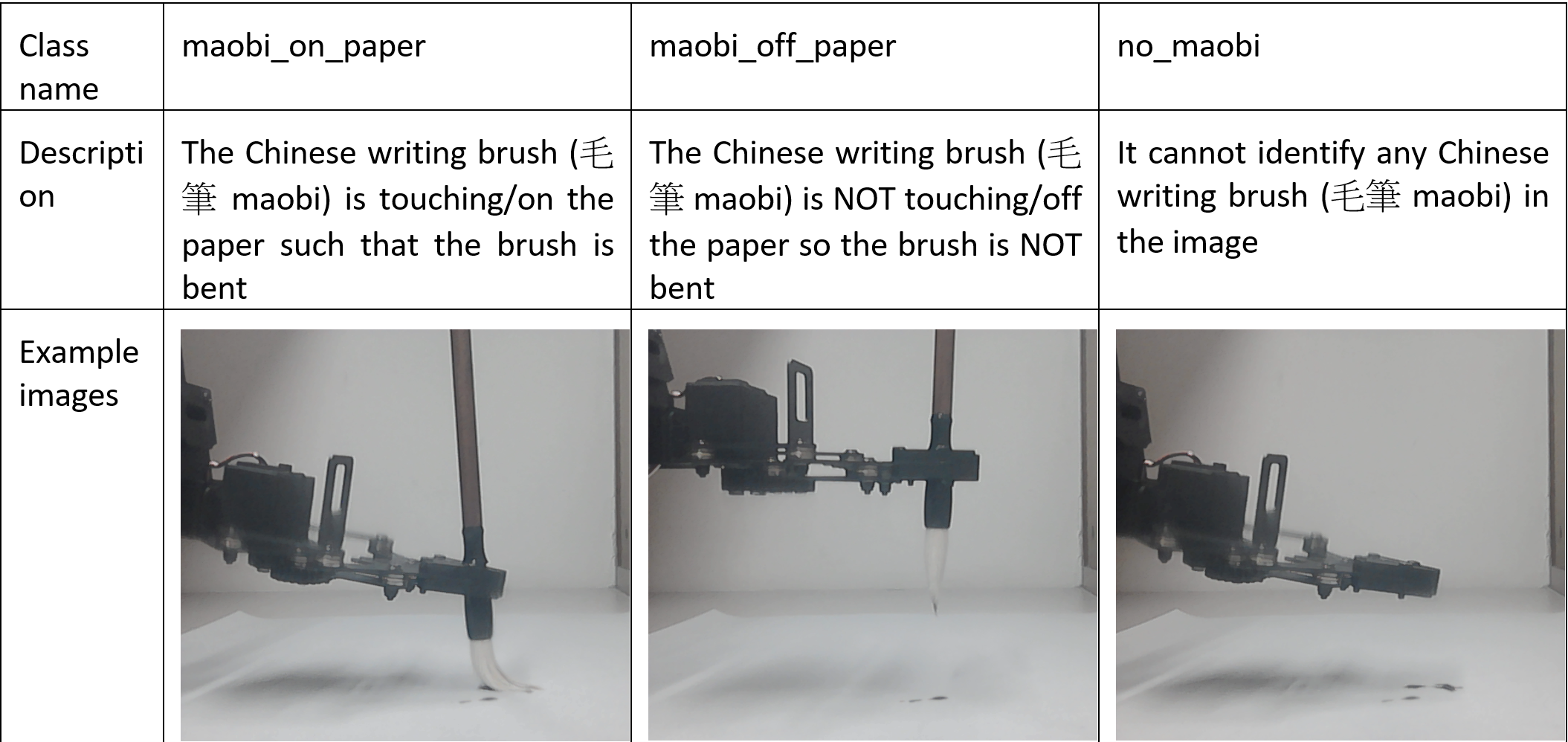

7. Computer Vision Calibration

A YOLOv8-based CNN classifies the brush state into three classes: maobi_on_paper, maobi_off_paper, and no_maobi. The model was trained on 994 annotated images, augmented to 2386 with Roboflow, and achieves 94.5% validation accuracy. The calibration routine moves the arm down until the brush touches the paper, then up slightly to find the optimal Z₀.
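The calibration routine can be sketched as a simple descend-then-back-off loop. In the example below, `read_brush_state` is a hypothetical callback that would move the arm to a given height, run the detector on a camera frame, and return one of the three class names; here it is replaced by a simulation, and the step and back-off sizes are arbitrary.

```python
def calibrate_z0(read_brush_state, z_start, z_min, step=1.0, backoff=0.5):
    """Lower the brush until contact is reported, then back off slightly."""
    z = z_start
    while z >= z_min:
        if read_brush_state(z) == "maobi_on_paper":
            return z + backoff
        z -= step
    # Never drive below z_min: treat missing contact as a failure.
    raise RuntimeError("no contact detected above z_min")


def simulated_state(z, paper_height=12.0):
    """Stand-in for the camera and model: contact at or below paper_height."""
    return "maobi_on_paper" if z <= paper_height else "maobi_off_paper"


z0 = calibrate_z0(simulated_state, z_start=20.0, z_min=5.0)
```

The lower bound `z_min` acts as a safety limit, so a missed detection raises an error instead of pressing the brush into the table.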

8. Results & Performance

Finally, the robotic arm follows the simulated 3D path to write the Chinese calligraphy.

The complete source code is available on GitHub.